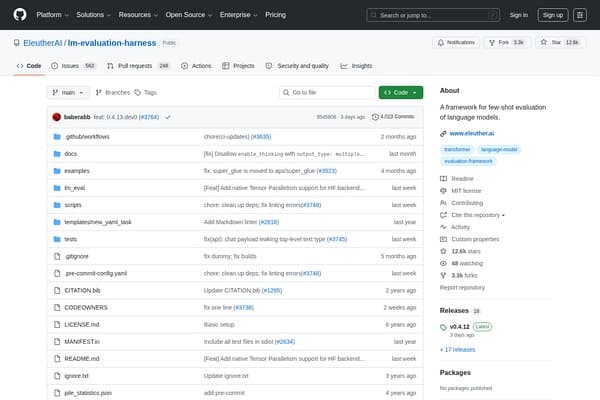

Optimize language model evaluations with ease!

The lm-evaluation-harness is developed by EleutherAI, an organization focused on democratizing AI research and providing robust tools to the community. The framework stands out for its focus on few-shot evaluation, in which a model is tested on a task after being shown only a handful of worked examples in its prompt, so researchers can assess models without large task-specific datasets. As language models grow more complex, evaluating them consistently becomes harder, and the harness addresses that need directly. Frequent updates and community contributions reflect the collaborative spirit of AI development.
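To make the idea of few-shot evaluation concrete, here is a minimal sketch of how a k-shot prompt can be assembled: a few solved examples are placed ahead of an unanswered question, and the model's completion is then scored. This is not the harness's own code. The function name `build_few_shot_prompt`, the `Q:`/`A:` layout, and the toy arithmetic data are all assumptions made for illustration.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    examples: List[Tuple[str, str]],
    query: str,
    num_shots: int,
) -> str:
    """Prepend the first `num_shots` solved examples to an unanswered query.

    Hypothetical helper for illustration; the prompt layout is an assumption.
    """
    if num_shots < 0 or num_shots > len(examples):
        raise ValueError("num_shots must be between 0 and len(examples)")
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples[:num_shots]]
    # The last block leaves the answer empty for the model to complete.
    blocks.append(f"Q: {query}\nA:")
    return "\n\n".join(blocks)


# Hypothetical toy data, for illustration only.
EXAMPLES = [
    ("What is 2 + 2?", "4"),
    ("What is 3 + 5?", "8"),
    ("What is 7 + 1?", "8"),
]

prompt = build_few_shot_prompt(EXAMPLES, "What is 6 + 3?", num_shots=2)
print(prompt)
```

Setting `num_shots=0` in this sketch yields a zero-shot prompt that contains only the unanswered question, which is a common baseline to compare few-shot results against.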

Apart from its core functionality, the harness is designed to be user-friendly. It offers numerous templates and example tasks to help users get started quickly. Additionally, its adaptability means that it can cater to various use cases, from academic research to real-world application testing. By choosing the lm-evaluation-harness, you're not just accessing a tool; you're joining a community committed to improving AI language capabilities.

The lm-evaluation-harness is completely free to use, with no hidden fees or subscription models. Because it is open source, users can download and modify the framework as needed.

Pros

Cons

You can evaluate a variety of language models, particularly those built for NLP tasks such as classification, summarization, and translation.

The framework comes with extensive documentation, available on its GitHub page, to help users get started and implement evaluations.

The framework allows evaluation metrics to be customized to fit specific research needs or project goals.

The tool is actively maintained, with regular updates from the community and contributors that keep it current with AI advancements.

There is an active community around EleutherAI's projects, including forums and discussion threads for support and collaboration.